As part of my PhD research, I applied natural language processing and machine learning to classify comments in the drafts of the research papers that students in my lab had previously written. Here is the journal article that I finally published. I know there is a paywall. If you want to read the entire article, leave a comment and I’ll email you a copy.

At the time, I wrote my own code to split data into test and validation sets, to clean up and edit the data, to replace fields, etc. Basically, I wrote custom Python scripts to manipulate the data. I wasn’t using Jupyter Notebooks either, just plain old IDLE. I wish I had known about pandas then.
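To give a feel for what such hand-rolled scripts look like, here is a minimal sketch using only the Python standard library. It is not the code from my research: the field names `text` and `label`, the sample comments, and the helper names `clean_record` and `split_records` are all made up for illustration.

```python
import random


def clean_record(record):
    """Collapse extra whitespace in the text and normalise the label.

    The 'text' and 'label' field names are hypothetical.
    """
    text = " ".join(record["text"].split())
    # Replace a missing or empty label with a placeholder value.
    label = (record.get("label") or "unknown").strip().lower()
    return {"text": text, "label": label}


def split_records(records, held_out_fraction=0.2, seed=42):
    """Shuffle a copy of the records and hold out a fraction of them."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    n_held_out = int(len(shuffled) * held_out_fraction)
    return shuffled[n_held_out:], shuffled[:n_held_out]


# Made-up sample data standing in for reviewer comments on paper drafts.
comments = [
    {"text": "  Please cite   the source. ", "label": "Content"},
    {"text": "Fix the typo in the title.", "label": "Grammar "},
    {"text": "This paragraph is unclear.", "label": None},
    {"text": "Add a figure here.", "label": "Content"},
    {"text": "Check   the tense.", "label": "grammar"},
]

cleaned = [clean_record(r) for r in comments]
main_set, held_out_set = split_records(cleaned, held_out_fraction=0.2)
print(len(main_set), "records kept,", len(held_out_set), "held out")
```

With pandas, most of this collapses into a few DataFrame operations, which is exactly why I wish I had known about it.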

For machine learning, I used LIBSVM, a simple but powerful library for support vector machines, through its Java version. Then I’d paste all the results into Excel to analyze everything.
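LIBSVM's command-line tools read data in a sparse text format, one example per line: a label followed by `index:value` pairs, with indices starting at 1 and zero-valued features left out. The sketch below shows how a script might write feature vectors in that format. The helper name `to_libsvm_line` and the sample vectors are hypothetical, and this is not necessarily how I encoded my own features.

```python
def to_libsvm_line(label, features):
    """Format one example as '<label> <index>:<value> ...'.

    Indices are 1-based and zero-valued features are omitted,
    as in LIBSVM's sparse data format.
    """
    pairs = [
        f"{index}:{value:g}"
        for index, value in enumerate(features, start=1)
        if value != 0
    ]
    return " ".join([str(label)] + pairs)


# Made-up examples: (label, dense feature vector).
examples = [
    (1, [0.5, 0, 2]),
    (-1, [0, 1, 0]),
]

lines = [to_libsvm_line(label, features) for label, features in examples]
for line in lines:
    print(line)
```

Each printed line can be saved to a text file and passed to LIBSVM's training tool.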

Enter Jupyter Notebooks

Sorry if the above sounded like jargon. If you are getting started with machine learning, chances are you are following tutorials that use Jupyter Notebooks.

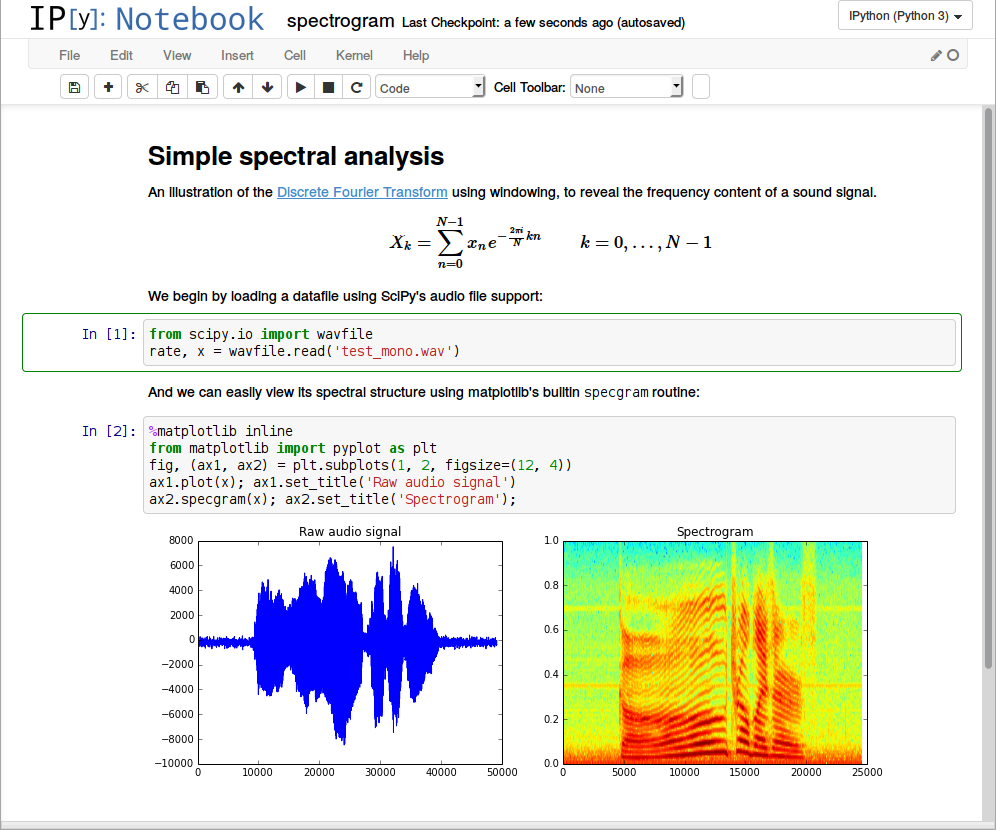

The Jupyter Notebook is an open source web application that you can use to create and share documents that contain live code, equations, visualizations, and text.

Cloud-based Free Notebooks

You can install and run Jupyter on your local machine, but there are a number of free “live” notebooks that you can play around with without the need to set up anything on your local machine, at least until you are familiar with all the concepts.

I got the following list from this website: dataschool.io. Check it out for the detailed comparison.

Recommended Courses to Get Started with Machine Learning

- Andrew Ng’s course on Coursera

- Google’s Machine Learning Crash Course

- Other courses on Udemy or other websites

Once you’ve built your model and tested it in the Notebook, you may be ready to try deploying it on the internet to see how it would work in a real production environment. I think I will cover this part in the next post. For now, enjoy the Notebooks!

Hi Harriet, I would like to read the journal article that you wrote (https://link.springer.com/article/10.1007/s10639-018-9705-7). Care to email it to me? I will really appreciate. Thanks!

Wow. Kindly email me a copy. I would like to read the journal article.